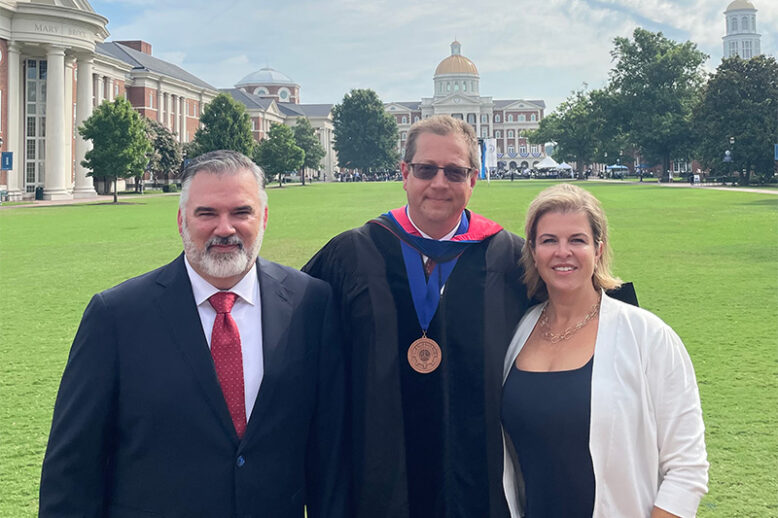

The AI-Powered Future of Military Strategy and Global Dominance

The integration of artificial intelligence (AI) into military strategy marks a profound shift in global warfare, one that goes well beyond enhancing existing capabilities. AI is redefining operational effectiveness and strategic power across the world’s armed forces, heralding an era in which digital intelligence is as critical as physical…